Why Weights Are the Wrong Place to Store Experience

The dominant paradigm in artificial intelligence encodes everything a system learns into the same structure it uses to compute. This conflation creates problems that scale cannot solve.

Understanding new forms of intelligence requires openness and dialogue.

Our resources bring together research signals, applied insights, and industry perspectives as we continue developing continuous learning systems in real environments.

Intelligence is not the elimination of uncertainty but the ability to act coherently within it.

An exploration of why continuous learning requires architectural separation of new and old knowledge, rather than just temporal depth.

Intelligence is not a static property that a system either has or lacks. It is a process with a temporal structure. We explore how systems relate to their own past, present, and future.

Three approaches to building more general artificial intelligence are now visible, but they diverge on whether learning should flow from building a rich pretrained world model or from interactive consequence.

Intelligence is a process embedded in time: prediction under consequence. We explore why more general forms of artificial intelligence will likely require architectural properties that couple learning directly to ongoing interaction with changing environments.

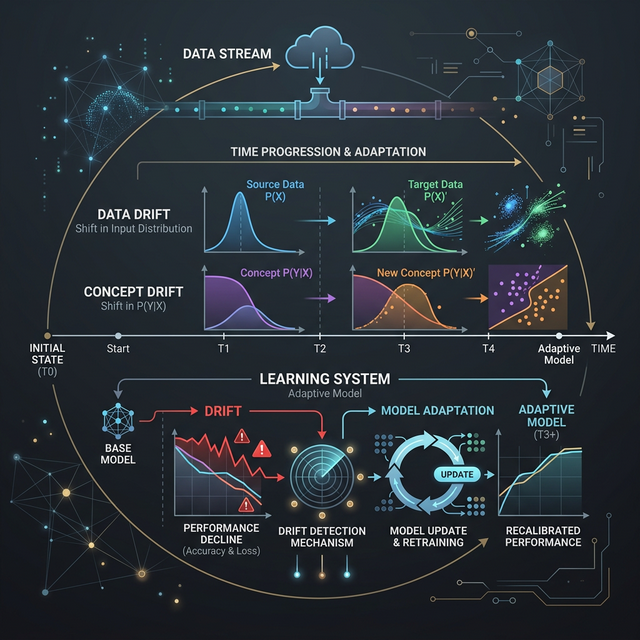

Learning systems face challenges when the statistical patterns of the world evolve faster than the model can adapt. We explore data, label, and concept drift.

Understanding the distinction between stateful and stateless systems helps clarify an important architectural question in artificial intelligence.

Treating uncertainty as a design constraint is essential for continually learning systems. A system that updates its internal state over time cannot postpone uncertainty until after the fact.

An intelligent system shouldn't necessarily require static, pre-programmed knowledge to solve novel future problems.

In systems that learn continuously, safety must be evaluated differently. It must apply to the mechanism of change itself, rather than just a favorable snapshot of present behavior.

Biological intelligence emerged inside continuous signals and causal time. We explore what the digital, discrete abstractions of modern machine learning assume away.

Prediction becomes far harder when the underlying rules shift frequently. Continuous coupling to consequence is an architectural necessity, not just a feature.

Intelligence is best understood as action-conditioned prediction under consequence. Discover what this framing implies for the future of AI architecture.

A first-principles clarification of continual learning as a runtime property: selective adaptation under consequence, distinct from retraining cycles, scale, or constant parameter drift.

Strong generalization can still fall short of true intelligence. We examine the critical difference between representational breadth and adaptive coupling to consequence over time.

A first-principles exploration of why intelligence emerged, how prediction and resource allocation shape adaptive behavior, and what this implies for the future of artificial intelligence.

We are currently engaging with investors and strategic partners interested in long-term technological impact grounded in scientific discipline.

Elysium Intellect represents a fundamentally different approach to artificial intelligence, prioritising continuous adaptation, reduced compute dependence, and real industrial application.

Conversations focus on collaboration, evidence building, and shared ambition.
